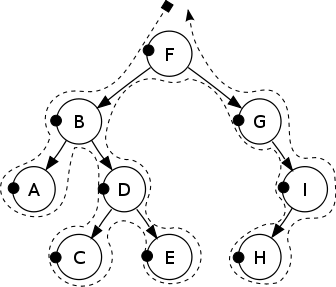

The graph above is a brief summary to three sorting method, generally speaking, it seems that the least efficiency should be selection sort...

According ti the code and example given during the lecture (http://www.cdf.toronto.edu/~heap/148/W14/Lectures/dustin/W10/sort.py), the result is

Random list generated

Example code in python:

def select(L):

"""Produce copy of L in sorted order by selection sort

"""

L1 = L[:]

for i in range(len(L1)):

m = min(L1[i:])

j = L1.index(m,i)

L1[i], L1[j] = L1[j], L1[i]

return L1

def merge_sort(L):

"""Produce copy of L in non-decreasing order

>>> merge_sort([1, 5, 3, 4, 2])

[1, 2, 3, 4, 5]

"""

if len(L) < 2 :

return L

else :

left_sublist = L[:len(L)//2]

right_sublist = L[len(L)//2:]

return merge2(merge_sort(left_sublist),

merge_sort(right_sublist))

def quick(L):

if len(L) > 1:

pivot = L[0] (*)

smaller_than_pivot = [x for x in L[1:] if x < pivot]

larger_than_pivot = [x for x in L[1:] if x >= pivot]

return quick(smaller_than_pivot) + [pivot] + quick(larger_than_pivot)

else:

return L

FOR 1000 ITEMS:

0.029001951217651367 - select sort

0.004999876022338867 - quick sort

0.10900616645812988 - quick sort on already-sorted list

0.008000850677490234 - merge sort

0.0010001659393310547 - built-in sort

0.0009999275207519531 - count_sort

This result gives a opposite conclusion...When I try more, the result varies

FOR 1000 ITEMS:

0.030001163482666016 - select sort

0.00400090217590332 - quick sort

0.11100602149963379 - quick sort on already-sorted list

0.009001016616821289 - merge sort

0.0 - built-in sort

0.0009999275207519531 - count_sort

However, if change the * to pivot = L[(len(L)//2)], the efficiency of quick sort on already-sorted list has improved. This is because when choosing the middle one as the pivot for the sorted list, the elements before pivot are both smaller than pivot, there is no need for recursion.

FOR 1000 ITEMS:

0.031002044677734375 - select sort

0.005000114440917969 - quick sort

0.00400090217590332 - quick sort on already-sorted list

0.00800013542175293 - merge sort

0.0 - built-in sort

0.0009999275207519531 - count_sort

Quick sort becomes more efficiency. From another website, http://cs.stackexchange.com/questions/3/why-is-quicksort-better-than-other-sorting-algorithms-in-practice

- Quicksort:

2nln(n) comparisons and13nln(n) swaps on average - Mergesort:

1.44nln(n) comparisons, but up to8.66nln(n) array accesses (mergesort is not swap based, so we cannot count that). - Insertionsort:

14n2 comparisons and14n2 swaps on average.

It seems that the type of input can change the efficiency. For example, an input in reverse-sorted order renders the quick sort very inefficient, whereas it may not have as big an impact on other sorts, which was another thing I had never know of from previous experience. In such cases, randomizing the input, or increasing the max-recursion limit can be very important.

A website found useful: http://www.cs.princeton.edu/courses/archive/spr07/cos226/lectures/04MergeQuick.pdf